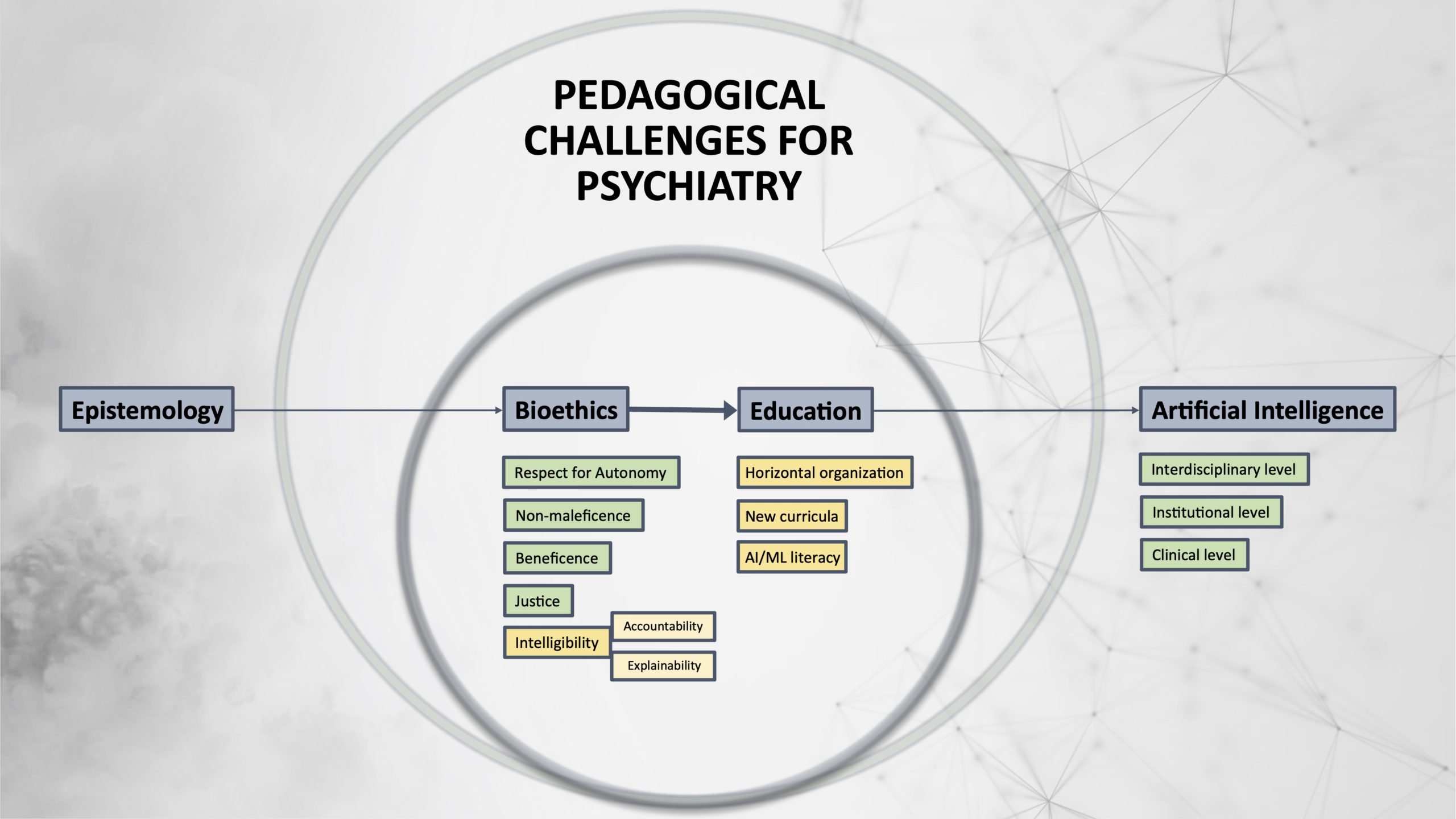

From machine learning to student learning: pedagogical challenges for psychiatry

Abstract

We agree with Georg Starke et al. (2020): despite the current lack of direct clinical applications, artificial intelligence (AI) will undeniably transform the future of psychiatry. AI has produced algorithms that can perform increasingly complex tasks by interpreting and learning from data (Dobrev 2012). AI applications in psychiatry are receiving growing attention, with a threefold increase in the number of PubMed/MEDLINE articles on AI in psychiatry over the past three years (N=567 results). The impact of AI on the entire psychiatric profession is likely to be significant (Torous et al. 2015; Huys, Maia, and Frank 2016; Grisanzio et al. 2018; Brown et al. 2019). These effects will be felt not only through the advent of advanced applications in brain imaging (Starke et al. 2020) but also through the stratification and refinement of our clinical categories, which touches on psychiatry's more profound challenge, one that “lies in its long-embattled nosology” (Kendler 2016).

These technical challenges are subsumed by ethical ones. In particular, the risk of non-transparency and reductionism in psychiatric practice is a burning issue. Clinical medicine has already developed the overarching ethical principles of respect for autonomy, non-maleficence, beneficence, and justice (Beauchamp and Childress 2001). The principle of Explainability should be added to this list, specifically to address the issues raised by AI (Floridi et al. 2018). Explainability concerns the understanding of how a given algorithm works (Intelligibility) and who is responsible for the way it works (Accountability). We fully agree with Starke et al. (2020) that Explainability is essential and constitutes a real challenge for future developments in AI. We think, however, that this ethical issue also requires dedicated pedagogical training, which must be underpinned by a solid epistemological framework.
